In this age of Big Data, it’s easier than ever to develop campaigns that resonate with your audience and target them directly. While it was once a queasy notion that advertisers have so much data on everyone, by now, most consumers accept—and even expect—personalization throughout the customer experience...

...as long as they feel like they can trust you.

As personalization and data-driven types of advertising become commonplace, people are not just more ad-literate, but more cognizant of the tracking and targeting techniques behind the ads they see.

Many embrace it—as long as they can benefit from it. In fact, Accenture found that 58% of people “would switch half or more of their spending to a provider that excels at personalizing experiences without compromising trust.”

Even more interestingly, some drop off the radar because of a lack of personalization.

This level of awareness shapes how people behave on the internet and in real life. Not everyone goes completely off the grid or browses the internet behind a VPN, of course, but it can affect what brands they engage with and how. And it can definitely influence who they decide to give their money to.

The fact is, people have always made purchasing decisions based on how much they trust a given brand. This is just a new part of that calculus.

Excellent personalization these days can mean a lot of things, what with predictive analytics, dynamic user experiences, targeted marketing campaigns, and more now thrown in the mix. Earning trust seems comparatively simple, doesn’t it?

Still, there are a few different aspects to consider when it comes to embracing data in a way that doesn’t make you lose that trust.

* * *

Stay Aligned With Your Values

First of all, it’s important to set your brand values at the start and stick to them. Those values should inform (among many other things) how you use data and where you serve your ads.

Digital advertising can feel very private since it largely takes place on a single user’s desktop or mobile device, but that doesn’t give you license to engage in shady or manipulative campaigns based on private information you have on them.

Imagine how you might approach the same campaign if the targeting tactics you used were, say, splayed on a huge billboard downtown for everyone to see. Obvious manipulation wouldn’t sit well with passersby; people could easily call you out for shenanigans, so you’d keep everything above board, right?

If your marketing is in line with your values across the board, there shouldn’t be a problem.

* * *

Remember Your Reputation Is on the Line

Using data responsibly is not just a matter of doing the right thing. Using data unethically or recklessly can also have major repercussions for your reputation and your company as a whole.

You want examples? Hoo boy, where do we even begin?

- Uber faced a litany of bad press and lawsuits after being busted for its “God View” feature that allowed company employees to effectively spy on users by tracking their location.

- For a recent data breach that exposed 30 million users’ private data to hackers, Facebook could be liable for a $1.63 billion fine under the GDPR.

- Speaking of Facebook, not only did Cambridge Analytica dissolve after being caught misusing Facebook user data, but its employees could be facing criminal charges.

- YouTube stars have been accused of taking advantage of followers struggling with mental health issues, referring them to a dubious online counseling service that compensates them for how many patients they refer.

- Even though Google eventually banned ads for payday lending, loan companies have found ways around the ban.

- Advertisers have used demographic targeting to get around ageism laws in hiring and racial discrimination laws in housing.

We could go on, but you get the point. There are simply not enough laws to protect vulnerable people, and the ones that exist are inconsistently enforced, so it is all the more important for advertisers to behave humanely.

Don’t be a jerk, basically.

* * *

Don’t Forget the Humans on the Other Side

Entire campaigns can easily run afoul of your moral code before they break any laws. Don’t forget that individual ads are served to real people. You are a human being advertising to other human beings.

Have you ever been served an ad that was just a little too targeted? It knows your name or your location, or where you ate lunch, or the shortcut you took home, or the kettle you picked up in the store and put down because it seemed too gaudy.

Do you want to buy from a brand that seems like it’s stalking you like that?

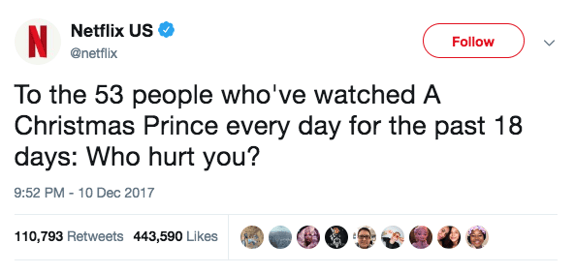

Or how about when Netflix tweeted a week before Christmas last year:

It was a cheeky joke that didn’t name any individual users, but it was a reminder of the depth of data Netflix employees can access about their users. (Spoiler alert: It didn’t go over well with a lot of people.)

People generally feel like ads have become too intrusive, so be wary of creeping anyone out.

* * *

Be Discerning with Where You Place Your Ads

Context is important to how trustworthy customers think you are, too. It’s not just the ads themselves, but where they live.

Boycotts of companies, for example, often persist (aided by social media) until those companies pull their ads from objectionable sites. One such campaign, against ultra-conservative media outlet Breitbart, encouraged more than 1,000 advertisers to pull their ads from the site.

Do we think that those 1,000 advertisers purposefully placed their ads there to begin with? Probably not. But with the rise of time-saving technology like programmatic display and video, it’s easy to “set it and forget it” and then have your brand splattered somewhere where you wouldn’t want it simply because a computer has no sense of right and wrong.

There is obviously a need for human decision-making throughout these processes, so where should big data end and normal-sized humans begin?

It’s simple: Let the data describe, and let people prescribe.

Data can tell you all about how the world is, but you need a person to make decisions about how it should be.

* * *

So What Does It All Mean?

Obviously, there are great benefits to the overabundance of data out there these days, both from a customer’s perspective and an advertiser’s perspective.

But just like great power, with great data comes great responsibility. At the end of the day, as marketers, we need to let our human minds and consciences prevail.

On the low-stakes end of things, manipulating customer data can make your brand look Orwellian and untrustworthy. And on the other end, the overreliance on and abuse of data and AI can actually create feedback loops, reinforce biases, and promote discrimination—not to mention land you in legal hot water.

There are plenty of aspects that can be automated or algorithmized, but an actual living, breathing human being still needs to keep an eye on things to guide the process and make final decisions, especially on the creative side of things.

No matter how much data you have, it’s ultimately just a tool. Better saws and hammers can help a carpenter make a better cabinet, but that doesn’t mean the carpenter can take the day off.
